2012, Volume 9, Number 1, January-March

In the Shadows of Patient Safety

Addressing Diagnostic Errors in Clinical Practice: Heuristics and Cognitive Dispositions to Respond

Christina J. Ansted, MPH, CCMEP

This article was reprinted with permission. Courtesy of CME Outfitters, LLC, 1395 Piccard Drive, Suite 370, Rockville, MD 20850. www.cmeoutfitters.com

Introduction

Real solutions to the problem of cognitive error in medical misdiagnosis are difficult to identify; as a result, strategies for improving patient safety tend to focus on more easily attainable objectives tied to more familiar types of medical error.(1)

However, diagnostic errors that affect patient safety are reported to be relatively common and to cause substantial harm to patients.(2) Evidence from autopsy studies indicates that thousands of patients die every year because of missed or delayed diagnoses. In many cases, these errors are attributable to cognitive errors or biases on the part of the diagnosing physician, who may be unaware of them. Diagnostic errors are now being viewed as the “next frontier” in patient safety.(3)

Diagnostic errors have been a neglected topic in patient safety, despite accounting for 17% of preventable errors in hospitalized patients.(2) Fortunately, the tide is changing: the Institute of Medicine (IOM), the American Board of Medical Specialties (ABMS), and the Accreditation Council for Graduate Medical Education (ACGME) have refocused the medical community on patient safety as a core issue in medical education. A diagnostic error can be defined as any diagnosis that was (a) unintentionally delayed, meaning sufficient information was available earlier or pre-ascertainment bias was employed by the physician; (b) wrong, meaning another diagnosis was made before the correct one; or (c) missed, meaning no diagnosis was ever made.(2) Approximately 1 in 250 patients experiences a diagnostic error, and most of these errors are considered preventable.

Poor teamwork, system errors, and poor intraprofessional communication among clinicians have been identified as predisposing factors for diagnostic error in emergency medicine and surgery. Diagnostic uncertainty is most evident, and delayed or missed diagnoses are most likely, in internal medicine, family medicine, and emergency medicine; however, all specialties are vulnerable to diagnostic errors.(1) Zwaan and colleagues also noted that lack of knowledge, inappropriate application of knowledge, inadequate information transfer, urgency of decision-making, and lack of supervision all contribute to diagnostic errors.(3,4)

Finally, heuristics also play a major role in medical misdiagnosis. When faced with a diagnostic challenge, clinicians follow a set of informal rules. These rules, often formed through experience or trial and error, give the clinician “a cognitive shortcut” through complex, multilayered situations, and they serve an important purpose by providing a familiar framework for making clinical decisions. Unfortunately, heuristics can also lead to errors by sending the clinician down the wrong path. These predictable patterns of response are also known as cognitive dispositions to respond (CDRs). A common failure occurs when a clinician settles on a single diagnosis and does not fully consider other diagnostic possibilities. The correct diagnosis is then often missed as clinicians “make up their minds” and begin to treat what turns out to be the wrong condition. Especially alarming is that, too often, these heuristic mistakes are never recognized during the patient’s lifetime and are discovered only post mortem.

Clinicians must avoid falling into heuristic traps, and they can do so by applying strategies that involve “better thinking about their thinking” (metacognition) and cognitive “debiasing” (deliberately counteracting known biases) when reaching diagnostic conclusions.(1,5) “Cases linked to diagnostic errors appear to be on the rise as primary care doctors, struggling with heavy case loads, take shortcuts or don’t act on their patient’s symptoms,” says Robert Hanscom, Vice President of Loss Prevention and Patient Safety for CRICO/RMF, a malpractice insurer that covers Harvard University-affiliated hospitals and doctors. Doctors are missing an enormous opportunity to save, prolong, or improve lives, avoid waste in the health care system, and save money.(6) Medical education in this context can help clinicians shed light on the darkened corners of diagnostic error and provide strategic solutions for everyday practice that improve patient safety at the earliest points of care across all disciplines.

Heuristics/Cognitive Dispositions to Respond (CDR)

“Diagnostic errors may be less visible and dramatic than getting the wrong leg cut off, but a delay in diagnosis can adversely affect a patient’s long-term outcome. […] Let us drill down and learn deeper lessons, like what could have been done differently.”

Gordon Schiff, MD, Associate Director of Patient Safety Research at Brigham and Women’s Hospital

The greatest challenge in reducing diagnostic errors lies in minimizing cognitive errors, specifically the biases and failed heuristics that underlie them.(1) Several common errors that clinicians make by misapplying heuristics correspond to the following cognitive biases:(7)

- Availability heuristic: Diagnosis of the current patient is biased by experience with past cases.

- Anchoring heuristic/premature closure: Relying on the initial diagnostic impression regardless of information to the contrary.

- Framing effects: Diagnostic decision-making is biased by subtle cues.

- Blind obedience: Placing undue reliance on test results or “expert opinion.”

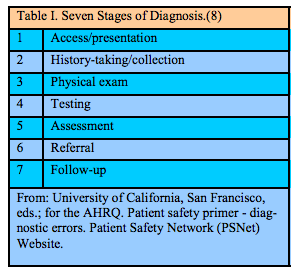

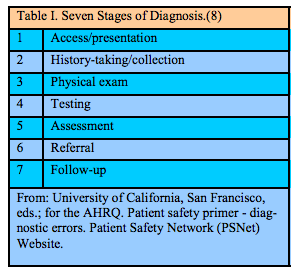

Cognitive error plays a role not only in medical misdiagnosis but also in patient safety problems related to medication errors. For medication errors, however, a structured approach to error identification provides a workable framework: one powerful heuristic in medication safety is to delineate the steps in the medication-use process (prescribing, transcribing, dispensing, administering, and monitoring) to help identify where an error occurred.(8) Diagnosis is harder to classify neatly because its stages are concurrent, recurrent, and complex, but it can be divided into seven stages (see Table I). This framework may help health care providers working to minimize diagnostic errors organize discussions, aggregate cases, and target areas for improvement and research.(8)

Clinicians are generally unaware of diagnostic errors that they have committed, particularly if they do not have an opportunity to receive feedback or follow patients over time. Therefore, regular feedback to clinicians on their diagnostic acumen or performance is essential. (7) The principal goal is to engage clinicians in a meta-cognitive process in which they reflect on their own thinking and the possible role of cognitive biases, including faulty heuristics. Such reflection may assist clinicians in catching some of their own misuse of heuristics before they cause harm. (7)

Cognitive dispositions to respond (CDRs), that is, tendencies to respond to particular situations in predictable ways, can lead to diagnostic errors. Understanding why clinicians have particular CDRs in certain clinical situations sheds considerable light on cognitive diagnostic errors. The most important strategy is to familiarize clinicians with the various types of CDRs and with ways to avoid them.

In addition to acknowledging that CDRs underlie cognitive diagnostic error, it is imperative to search for effective debiasing techniques.(1) A substantial amount of research is under way in this area, yet no gold standard for debiasing has emerged. For now, however, clinicians can be encouraged to evaluate their own use of typical heuristic biases or CDRs by applying these techniques in reflection and in clinical practice, a tactic that by itself may help reduce medical misdiagnosis.

Doctors all too often miss the right diagnosis because they commit a form of cognitive error known as “premature closure”: settling on one diagnosis without considering alternatives. Instead, they could ask themselves, “What else could this be?” or “What is the worst thing that could be going on?”(5) Studies over the past 40 years have shown that even well-trained, highly competent doctors are capable of making diagnostic errors because of cognitive shortcuts, often taken in the name of efficiency. Many errors occur when doctors come to a decision too quickly (“this is definitely a case of pneumonia”) and then defend that judgment too vigorously, even in the face of contradictory evidence.(9) The take-home message for clinicians? Do not take the right diagnosis for granted; check and recheck (a recurring theme in avoiding medical errors) to make sure the diagnosis is correct.

Faulty Information-Gathering and Suboptimal Communication

“Timely diagnosis can be decisive in determining whether patients experience major adverse outcomes. Hence, while diagnosis error remains more in the shadows than in the spotlight of patient safety, this aspect of clinical medicine is clearly vulnerable to well documented failures and warrants an examination through the lens of modern patient safety and quality improvement principles.”(8)

Communication breakdowns contribute to diagnostic error. These can involve communication between patient and physician or among clinicians and other health care providers.(10) In more than 10% of cases involving diagnostic error, the first evaluating physician missed the diagnosis or arrived at an incorrect one, most commonly because of failure to take an adequate history.(11) Failure to diagnose because of missing information is another core issue seen in case reviews and in the medical literature. Critical information can be missed because of cognitive biases and errors, including failures in vital patient-physician communication skills; lack of access to medical records; failures in the transmission or review of diagnostic test results; or faulty organization of records, paper or electronic, that makes needed information hard to review or find quickly (system errors).

Information “overload” is another problem certain to grow as more clinical information is stored electronically, where the patient’s condition can be “lost” in a sea of data.(8) Simply creating and maintaining a patient problem list can help prevent diagnostic errors. However, most institutions, even those with advanced electronic information systems, have not succeeded in making this documentation tool operational. This reflects a broader challenge: consistently applying documentation advances in clinical practice.(8)

Another aspect of diagnostic assessment is the need to recognize the urgency of diagnoses and complications. Failing to make the exact diagnosis is often less important than failing to correctly assess the urgency of the patient’s illness, and such misjudgments of urgency can result from suboptimal communication.(8)

Clinicians and patients must be able to discuss concerns in an efficient and productive manner. Cases need to be reviewed in sufficient detail to make them “real.” Firsthand clinical information often radically changes clinician understanding from what the more superficial “first story” suggested. Additionally, and perhaps most importantly, patients should be engaged with their clinicians on multiple levels to become “coproducers” in a safer practice of medical diagnosis. (8)

Access to and Follow-Up on Abnormal Test Results

“Medicine is often a crapshoot and an odds game, and doctors can miss a diagnosis even if they adhere to guidelines on when to order a test. Reducing diagnostic errors will require a focus on larger system failures, such as preventing lab results from getting lost and developing checklists to help doctors distinguish between, say, a ‘low-risk’ headache and a ‘high-risk’ headache.”

Peter J. Pronovost, MD, PhD, FCCM

Failures of communication, especially access to and follow-up on abnormal diagnostic test results, can lead to errors, adverse events, and liability claims. To address this challenge, The Joint Commission has prioritized safe and timely communication of critical test results as a National Patient Safety Goal (NPSG.02.03.01), which requires clinicians to “report critical results of tests and diagnostic procedures on a timely basis.” However, although the NPSGs are well known, this goal remains one of the most commonly cited areas of nonadherence among clinicians. (12) An article by Singh and Vij provides eight recommendations for improving communication of abnormal test results within health care institutions; these recommendations serve as a valuable resource for clinicians in practice. (12)

Growing evidence shows that delays in diagnosis constitute a common medical error and represent a significant threat to patient safety.(13) Problems in the test-result reporting system often involve the mishandling of abnormal test results (“missed results”). Ensuring that a requested test has been completed and integrated into the plan of care involves multiple steps and multiple individuals. Poon and associates found that, on average, a full-time clinician is responsible for reviewing one thousand test results each week.(13) The vast majority of these results will be normal, and most abnormal results do not require any specific clinician response. However, given the volume of information that clinicians both generate and review, it is becoming increasingly clear that more robust solutions are needed.(14)

Failure (or inability) to promptly follow up on abnormal test results may lead to diagnostic errors and other safety problems. A study conducted in Veterans Affairs (VA) clinics found that 1 in 10 alerts for abnormal laboratory test results went unread by providers, and a large proportion of those patients did not receive timely clinical follow-up.(15) VA studies also show that doctors are often overwhelmed by alerts and may not follow up, even when an alert indicates that a test result is abnormal.(16) Hardeep Singh, MD, Chief of the Health Quality and Policy Program at Houston’s VA Research Center, says that the VA studies “also show that if both a primary-care doctor and a specialist get test results, each assumes the other will follow up.” He adds, “Patients may think that if something was wrong, my doctor would have told me. But no news is not necessarily good news, and patients need to be empowered to follow up on their lab results and participate more actively in their care.”(16)

Clinical Connections

- Apply strategies that involve “better thinking about your thinking” (metacognition) and cognitive “debiasing” (deliberately counteracting known biases) when reaching diagnostic conclusions.

- Consider using the “Seven Stages of Diagnosis” (Table I) to help minimize the occurrence of diagnostic errors.

- Familiarize yourself with the various types of cognitive dispositions to respond (CDRs) and ways to avoid them.

- Do not take the right diagnosis for granted; check and recheck to make sure the diagnosis is correct.

- Take the time to gather an accurate patient history.

- Create and maintain a patient problem list.

- Review and consider Singh and Vij’s recommendations for improving communication of abnormal test results within health care institutions.

- Promptly follow up on abnormal test results.

  - Do not assume that the patient’s other doctor, whether the primary care physician (PCP) or the specialist, will follow up.

  - Encourage patients to ask about their test results and become more involved in their care.

References

1. Croskerry P. The importance of cognitive errors in diagnosis and strategies to minimize them. Acad Med 2003; 78:775-780. PubMed ID: 12915363.

2. Trowbridge R, Salvador D. Addressing diagnostic errors: an institutional approach. Focus Pt Safety 2010; 13:1-2, 5. Patient Safety Network (PSNet) Website. http://www.psnet.ahrq.gov/resource.aspx?ResourceID=19544. Accessed March 18, 2011.

3. Zwaan L, de Bruijne M, Wagner C, et al. Patient record review of the incidence, consequences, and causes of diagnostic adverse events. Arch Intern Med 2010; 170:1015-1021. PubMed ID: 20585065.

4. Thomas EJ, Brennan T. Diagnostic adverse events: on to chapter 2: comment on Patient record review of the incidence, consequences, and causes of diagnostic adverse events. Arch Intern Med 2010; 170:1021-1022.

5. Wachter RM. Why diagnostic errors don’t get any respect— and what can be done about them. Health Aff (Millwood) 2010; 29:1605-1610. PubMed ID: 20585066.

6. Clark C. 5 Tips for avoiding diagnostic errors. HealthLeaders Media Website. http://www.healthleadersmedia.com/content/COM-256179/5-Tips-for-Avoiding-Diagnostic-Errors. Published September 8, 2010. Accessed March 18, 2011.

7. University of California, San Francisco, eds.; for the Agency for Healthcare Research and Quality [AHRQ]. Patient safety primer: diagnostic errors. Patient Safety Network (PSNet) Website. http://psnet.ahrq.gov/primer.aspx?primerID=12. Accessed March 18, 2011.

8. Schiff GD, Kim S, Abrams R, et al. Diagnosing diagnosis errors: lessons from a multi-institutional collaborative project. In: Henriksen K, Battles JB, Marks ES, et al., eds. Advances in Patient Safety: From Research to Implementation. Volume 2: Concepts and Methodology. Rockville, MD: U.S. Agency for Healthcare Research and Quality [AHRQ]; 2005. AHRQ Website. http://www.ahrq.gov/downloads/pub/advances/vol2/Schiff.pdf. Accessed April 8, 2011.

9. Wachter R. Patient safety: how to understand and prevent medical mistakes. Version 16. Knol. Knol Google Website. http://knol.google.com/k/robert-wachter/patient-safety/I8d6CVRe/NRSyrQ. Published February 12, 2009. Accessed April 6, 2011.

10. Graber ML. Educational strategies to reduce diagnostic error: can you teach this stuff? Adv Health Sci Educ Theory Pract 2009; 14:63-69. PubMed ID: 19669922.

11. Singh H, Thomas EJ, Khan MM, Petersen LA. Identifying diagnostic errors in primary care using an electronic screening algorithm. Arch Intern Med 2007; 167:302-308. PubMed ID: 17296888.

12. Singh H, Vij MS. Eight recommendations for policies for communicating abnormal test results. Jt Comm J Qual Patient Saf 2010; 36:224-232.

13. Poon EG, Wang SJ, Gandhi TK, Bates DW, Kuperman GJ. Design and implementation of a comprehensive outpatient Results Manager. J Biomed Inform 2003; 36:80-91. PubMed ID: 14552849.

14. Wahls TL, Cram PM. The frequency of missed test results and associated treatment delays in a highly computerized health system. BMC Fam Pract 2007; 8:32. PubMed ID: 17519017.

15. Singh H, Thomas EJ, Sittig DF, et al. Notification of abnormal lab test results in an electronic medical record: do any safety concerns remain? Am J Med 2010; 123:238-244. PubMed ID: 20193832.

16. Landro L. The Informed Patient: What the doctor missed: using malpractice claims to help physicians avoid diagnostic mistakes, delays. The Wall Street Journal Website. http://online.wsj.com/article/SB10001424052748703694204575517834198205438.html#articleTabs%3Darticle. Published September 27, 2010. Accessed March 17, 2011.

Christina J. Ansted, MPH, CCMEP, holds a Bachelor of Science degree from the University of Vermont, with a minor in applied design, and a Master of Public Health degree from New York Medical College. She has worked in the field of medical education since 2001.